How we set up Nginx FastCGI cache for WordPress on Laravel Forge



For our WordPress sites at vCore Digital, we wanted a caching setup that lived at the server layer, stayed predictable, and did not depend on a plugin doing all the heavy lifting.

That pushed us toward Nginx FastCGI cache.

It is not the only way to speed up WordPress on Forge. It is also not the easiest thing to get right the first time. But once the logic is in place, it gives you a clean, reusable setup that works well for brochure sites, marketing sites, and a lot of WooCommerce builds, provided you are careful about what should bypass cache.

This is the setup we use, why we use it, how to add it to a Laravel Forge server, and what to watch out for.

Why we chose FastCGI cache instead of a plugin-first setup

People usually approach WordPress caching in one of three ways:

- A full-page caching plugin.

- Object caching with Redis.

- Server-level page caching in Nginx.

We are not against plugins. We use them when they make sense. But for our own WordPress hosting stack on Laravel Forge, server-level caching is the most reliable way to speed up public pages and reduce unnecessary PHP work.

Here is the practical reason.

If a visitor is not logged in, is not in the cart or checkout flow, and is just requesting a normal page, we do not want PHP and WordPress rebuilding that page again and again. We want Nginx to serve the response immediately.

That gives us a few advantages:

- fewer PHP requests hitting PHP-FPM

- lower backend response times for anonymous traffic

- less plugin complexity inside WordPress

- simpler debugging through response headers like X-Cache and X-Cache-Bypass-Reason

This does not replace everything else.

Redis object caching still helps WordPress build uncached pages more efficiently. Cloudflare still helps at the edge. But FastCGI cache is the layer that stops WordPress from doing work it should not be doing in the first place.

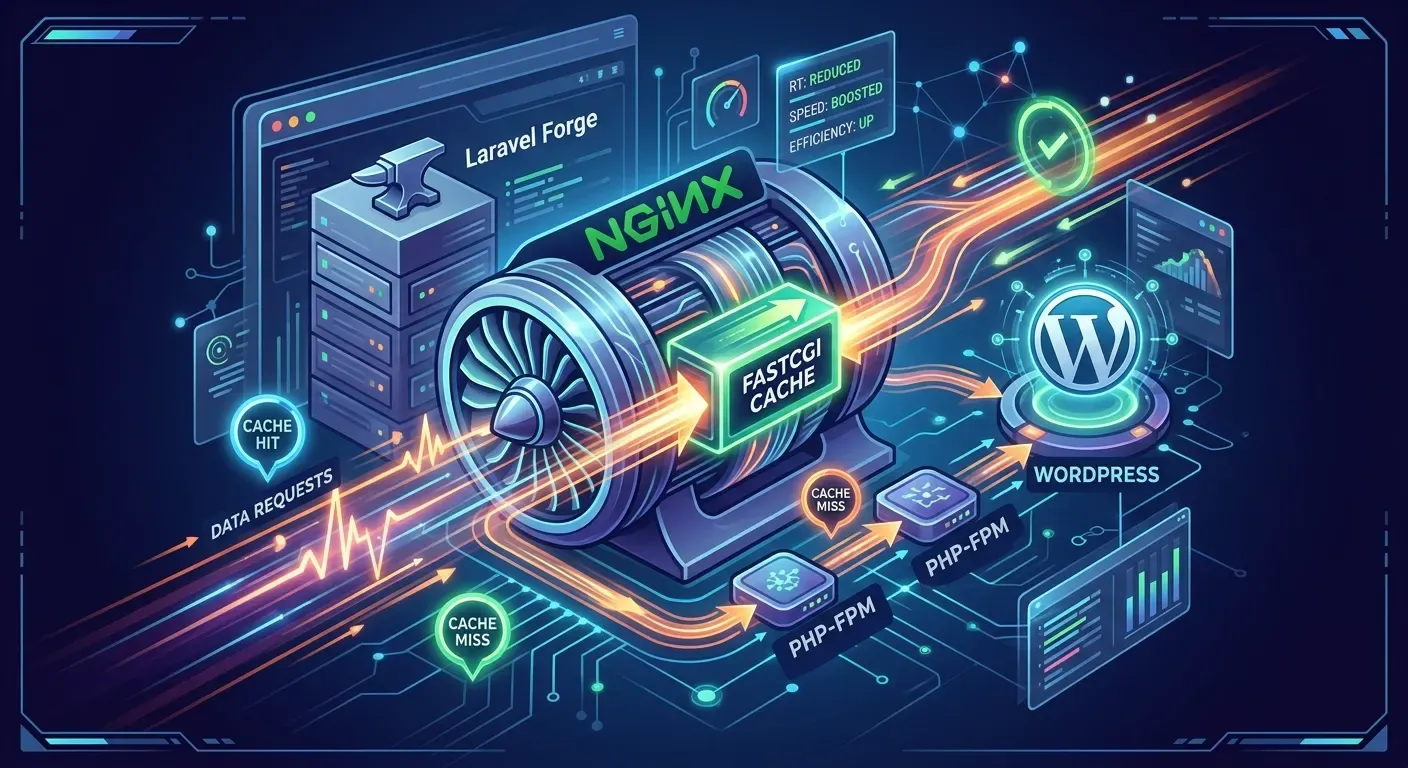

How FastCGI cache works in a Forge WordPress stack

At a high level, the flow looks like this:

- A request reaches Nginx.

- Nginx decides whether the request should bypass cache.

- If the request is cacheable and a cached response exists, Nginx serves it.

- If not, Nginx passes the request to PHP-FPM.

- WordPress generates the response.

- Nginx stores that response in the FastCGI cache for the next request.
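To make the order of those steps concrete, here is a toy in-memory model of the flow. It is an illustration, not how Nginx is implemented: the dictionary stands in for the cache zone, `render_page` is a hypothetical stand-in for PHP-FPM and WordPress, and the status strings mirror the values Nginx reports in $upstream_cache_status.

```python
from typing import Callable, Dict, Tuple


def make_handler(render: Callable[[str], str]) -> Callable[[str, bool], Tuple[str, str]]:
    """Return a request handler with its own cache, following the steps above."""
    cache: Dict[str, str] = {}

    def handle(key: str, bypass: bool) -> Tuple[str, str]:
        if bypass:                  # the skip rules said "do not cache": always hit PHP
            return "BYPASS", render(key)
        if key in cache:            # a cached copy exists: PHP is never called
            return "HIT", cache[key]
        body = render(key)          # PHP-FPM and WordPress build the page
        cache[key] = body           # store it for the next request
        return "MISS", body

    return handle


if __name__ == "__main__":
    php_calls = []

    def render_page(key: str) -> str:   # hypothetical stand-in for PHP-FPM + WordPress
        php_calls.append(key)
        return f"<html>{key}</html>"

    handle = make_handler(render_page)
    print(handle("httpsGETexample.com/", False)[0])   # MISS
    print(handle("httpsGETexample.com/", False)[0])   # HIT
    print(handle("httpsPOSTexample.com/", True)[0])   # BYPASS
    print(len(php_calls))                             # 2: the HIT never reached PHP
```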

The important thing to understand is this:

FastCGI cache is not browser cache, and it is not Cloudflare cache.

It is an origin-side response cache inside Nginx. That means it improves the server itself, even before you add any edge caching on top.

Why this instead of static HTML plugins or “Cache Everything” rules

We prefer this setup because it keeps the origin sane.

A plugin-based full-page cache can work, but it pushes more logic into WordPress itself. That is the same application stack we are trying to protect from unnecessary load.

A Cloudflare “Cache Everything” rule can also work, but it is a layer above the origin. It helps a lot, but it does not fix a weak origin setup.

For us, the better order is:

- Make the origin efficient.

- Make PHP-FPM less busy.

- Make WordPress skip work where possible.

- Then add Cloudflare edge caching on top.

That gives us better control and fewer surprises.

Tune the kernel TCP stack before touching Nginx

Before we changed the web-server config, we also reviewed the server’s TCP stack.

That matters because Nginx does not accept connections in isolation. Linux still has to handle SYN queues, accept backlogs, retransmits, and congestion control before Nginx serves a cached page. If those defaults are too conservative for your traffic pattern, you can lose time before PHP-FPM or FastCGI cache even come into play. The kernel docs explicitly tie somaxconn to the listen() backlog and point to tcp_max_syn_backlog as another related setting for TCP listeners.

SSH into the server first:

ssh forge@your-server-ip

Create a sysctl file for your Nginx-related network tuning:

sudo nano /etc/sysctl.d/99-nginx.conf

Add this:

net.core.somaxconn = 65535

net.core.netdev_max_backlog = 250000

net.ipv4.tcp_max_syn_backlog = 262144

net.ipv4.tcp_fin_timeout = 15

net.ipv4.tcp_mtu_probing = 1

net.ipv4.tcp_slow_start_after_idle = 0

net.ipv4.tcp_congestion_control = bbr

Then apply it:

sudo sysctl --system

What these settings do

net.core.somaxconn raises the cap on the socket listen backlog. On modern Linux kernels, the documented default is 4096. Raising it gives the server more room to absorb bursts of incoming connections before the accept queue fills up.

net.ipv4.tcp_max_syn_backlog raises the maximum number of half-open TCP handshakes (connection requests the client has not yet acknowledged) the kernel remembers at once. The kernel docs specifically mention increasing it if a server is suffering overload from too many simultaneous connection attempts.

net.core.netdev_max_backlog increases the queue for incoming packets at the network-device layer. This is one of those settings that can help under short bursts, especially when the server is receiving packets faster than the kernel can process them immediately.

net.ipv4.tcp_fin_timeout reduces how long orphaned connections (ones no application holds any more) sit in FIN-WAIT-2 before the kernel drops them. It is not a magic performance switch, but it can help keep connection cleanup more disciplined on busy boxes.

net.ipv4.tcp_mtu_probing = 1 enables MTU probing only when Linux detects a path-MTU problem. That makes it a reasonable defensive setting for public-facing servers rather than an aggressive always-on tweak.

net.ipv4.tcp_slow_start_after_idle = 0 tells Linux not to shrink the congestion window after idle periods. The kernel docs describe the default RFC 2861 behaviour, and this switch lets you opt out of it, which can help bursty web workloads.

net.ipv4.tcp_congestion_control = bbr switches the server to BBR if the kernel supports it. That can improve latency and throughput on many networks, but it should be verified rather than assumed. Linux exposes the available congestion-control algorithms directly.

Check whether BBR is available:

sysctl net.ipv4.tcp_available_congestion_control

If bbr appears in the list, you are good to go. If not, leave the congestion-control setting alone.
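After sudo sysctl --system, it is worth confirming the kernel actually took each value, because a rejected setting is only reported in the command output and is easy to miss. A minimal Python sketch, assuming the /etc/sysctl.d/99-nginx.conf path used above: it reads each key = value line and compares it with the live value under /proc/sys, where net.core.somaxconn maps to /proc/sys/net/core/somaxconn. The function names are illustrative.

```python
from pathlib import Path
from typing import Dict, Optional, Tuple


def parse_sysctl_conf(text: str) -> Dict[str, str]:
    """Parse 'key = value' lines, skipping blank lines and # or ; comments.

    Simplified: keys prefixed with '-' (ignore-errors) are not handled.
    """
    settings: Dict[str, str] = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith(("#", ";")):
            continue
        key, sep, value = line.partition("=")
        if sep:
            # Normalise whitespace so multi-value keys compare cleanly with /proc output.
            settings[key.strip()] = " ".join(value.split())
    return settings


def proc_path(key: str) -> Path:
    """Map a dotted sysctl key to its file under /proc/sys."""
    return Path("/proc/sys") / key.replace(".", "/")


def drift(conf_file: Path) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return {key: (wanted, live)} for every setting whose live value differs."""
    mismatches: Dict[str, Tuple[str, Optional[str]]] = {}
    for key, wanted in parse_sysctl_conf(conf_file.read_text()).items():
        path = proc_path(key)
        live = " ".join(path.read_text().split()) if path.exists() else None
        if live != wanted:
            mismatches[key] = (wanted, live)
    return mismatches


if __name__ == "__main__":
    conf = Path("/etc/sysctl.d/99-nginx.conf")
    if not conf.exists():
        print(f"{conf} not found")
    else:
        for key, (wanted, live) in drift(conf).items():
            print(f"{key}: wanted {wanted!r}, live {live!r}")
```

An empty result means every value in the file is live. A line such as tcp_congestion_control showing live 'cubic' is the signal to revisit the BBR check.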

One important caveat

Kernel backlog tuning is only half the story. Nginx still has its own listener settings, such as the backlog parameter on the listen directive, and its own worker limits. Raising somaxconn on its own does not magically fix a server if the Nginx side is still configured too conservatively. That is why we treat this as a foundation step, not the whole optimization.
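The arithmetic behind that caveat is small. Per listen(2), Linux silently caps any backlog an application requests at net.core.somaxconn, and the Nginx docs give 511 as the default backlog for the listen directive on Linux. The effective accept queue is therefore the smaller of the two numbers, as this short sketch shows:

```python
# Default of the listen directive's backlog= parameter on Linux, per the Nginx docs.
NGINX_DEFAULT_BACKLOG_LINUX = 511


def effective_backlog(requested: int, somaxconn: int) -> int:
    """The accept-queue limit a listening socket actually gets."""
    # listen(2): a backlog larger than net.core.somaxconn is silently truncated to it.
    return min(requested, somaxconn)


if __name__ == "__main__":
    # somaxconn raised to 65535, but Nginx still asks for its default: the queue stays at 511.
    print(effective_backlog(NGINX_DEFAULT_BACKLOG_LINUX, 65535))
    # Nginx asks for more, but the kernel default of 4096 is still in place.
    print(effective_backlog(65535, 4096))
```

For Nginx to use the larger limit, a listen directive has to request it (for example backlog=65535, set once per address and port). Treat that value as an example rather than a recommendation.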

The global cache zone we define first

Now we need to set up the cache path and the global cache zone.

That matters because the site-level config will reference a cache zone called WORDPRESS. If that zone is not already defined at the global (http) level, Nginx will reject the site config when you test or reload it.

On our Forge servers, the process usually looks like this:

1. Create the cache path and set permissions

sudo mkdir -p /var/cache/nginx/wordpress

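sudo chown -R www-data:www-data /var/cache/nginx

That gives Nginx a dedicated cache directory it can actually write to. The owner should be whichever user your Nginx worker processes run as (the user directive in /etc/nginx/nginx.conf), so adjust www-data if your server uses a different one.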

2. Define the cache zone globally

We then create a separate Nginx config file loaded from the http {} context. On Forge, we usually do that in:

/etc/nginx/conf.d/fastcgi-cache.conf

With this content:

fastcgi_cache_path /var/cache/nginx/wordpress levels=1:2 keys_zone=WORDPRESS:100m inactive=60m max_size=1g;

fastcgi_cache_key "$scheme$request_method$host$request_uri";

Then test and reload Nginx:

sudo nginx -t && sudo systemctl reload nginx

What this does:

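- /var/cache/nginx/wordpress is the cache directory

- levels=1:2 spreads cache files across a two-level directory tree instead of one huge folder

- WORDPRESS is the name of the shared cache zone

- 100m is the size of the shared-memory zone that holds cache keys and metadata (the Nginx docs put it at roughly 8,000 keys per megabyte)

- inactive=60m removes entries that have not been requested for an hour, whether or not they are still fresh

- max_size=1g caps disk usage

- fastcgi_cache_key "$scheme$request_method$host$request_uri"; defines how cached responses are keyed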

That cache key is important. It tells Nginx to cache based on the request scheme, method, host and URI. In other words, a normal GET request to one page URL becomes one cache entry.
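Nginx names each cache file after the MD5 hash of that key, and levels=1:2 uses the last character of the hash as the first directory and the two characters before it as the second. The sketch below reproduces that so you can locate the file for a given URL under /var/cache/nginx/wordpress. It assumes the key format configured above; the helper names are illustrative.

```python
import hashlib
from pathlib import PurePosixPath
from typing import Sequence


def cache_key(scheme: str, method: str, host: str, request_uri: str) -> str:
    """Mirror fastcgi_cache_key "$scheme$request_method$host$request_uri"."""
    return f"{scheme}{method}{host}{request_uri}"


def path_for_digest(root: str, digest: str, levels: Sequence[int] = (1, 2)) -> PurePosixPath:
    """Build the levels= directory path: each level takes characters from the end of the hash."""
    parts = []
    end = len(digest)
    for width in levels:
        parts.append(digest[end - width:end])
        end -= width
    return PurePosixPath(root, *parts, digest)


def cache_file(root: str, key: str, levels: Sequence[int] = (1, 2)) -> PurePosixPath:
    """Where Nginx would store the response for this cache key."""
    return path_for_digest(root, hashlib.md5(key.encode()).hexdigest(), levels)


if __name__ == "__main__":
    key = cache_key("https", "GET", "example.com", "/")
    print(key)                                            # httpsGETexample.com/
    print(cache_file("/var/cache/nginx/wordpress", key))
```

Because the request method is part of the key, a HEAD request (what curl -I sends) and a GET for the same URL end up in different files.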

If you want to experiment with a RAM-backed cache directory, you can. We tested that too, but did not see any noticeable difference.

The cache rules we use for WordPress and WooCommerce

For WordPress, the hardest part is not turning caching on.

The hardest part is deciding what not to cache.

These are the rules we use:

set $skip_cache 0;

set $bypass_reason "";

# Skip cache for POST or query-string requests

if ($request_method = POST) {

set $skip_cache 1;

set $bypass_reason "POST request";

}

if ($is_args) {

set $skip_cache 1;

set $bypass_reason "Query string";

}

# Maintenance mode

if (-f "$document_root/.maintenance") {

set $skip_cache 1;

set $bypass_reason "Maintenance mode";

}

# Skip sensitive/admin URLs

if ($request_uri ~* "/wp-admin/|/xmlrpc.php|/wp-json|/rest_route=|wp-.*\.php|/feed/|index.php|sitemap(_index)?\.xml") {

set $skip_cache 1;

set $bypass_reason "Special URL";

}

# Logged-in cookies

if ($http_cookie ~* "wordpress_logged_in|comment_author|wp-postpass|wordpress_no_cache") {

set $skip_cache 1;

set $bypass_reason "Logged-in cookie";

}

# WooCommerce-like routes

if ($request_uri ~* "/cart|/checkout|/my-account|/addons") {

set $skip_cache 1;

set $bypass_reason "Ecommerce path";

}

if ($cookie_woocommerce_items_in_cart = "1") {

set $skip_cache 1;

set $bypass_reason "Cart not empty";

}

location ~ \.php$ {

fastcgi_split_path_info ^(.+\.php)(/.+)$;

# Normal PHP wiring

include fastcgi_params;

fastcgi_pass unix:/var/run/php/php8.3-fpm-vanyukov.sock;

fastcgi_index index.php;

fastcgi_param SCRIPT_FILENAME $realpath_root$fastcgi_script_name;

# FastCGI cache

fastcgi_cache WORDPRESS;

fastcgi_cache_bypass $skip_cache;

fastcgi_no_cache $skip_cache;

fastcgi_cache_valid 200 301 302 1m;

fastcgi_cache_use_stale error timeout invalid_header updating http_500 http_503;

add_header X-Cache $upstream_cache_status always;

add_header X-Cache-Bypass-Reason $bypass_reason always;

add_header X-Cache-Enabled "true" always;

add_header X-Upstream-Cache $upstream_cache_status always;

add_header X-Upstream-RT $upstream_response_time always;

add_header X-Req-RT $request_time always;

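}

This is the exact pattern we ended up using on our own stack. One Nginx rule to keep in mind: add_header directives are inherited from the server level only when the current level defines none of its own. Because this PHP block adds the debug headers, server-level headers such as X-Frame-Options and X-Content-Type-Options are not sent on PHP responses unless you repeat them inside the block.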
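Because Nginx runs these if blocks in order and each match overwrites $bypass_reason, the reported reason is the last rule that matched, not the first. A Python model of the same rules makes that, and the exact regex behaviour, easy to check before touching a live server. It is a sketch that re-implements the rules above rather than calling Nginx; the function names are illustrative, and it assumes you pass in the raw Cookie header and whether the .maintenance file exists.

```python
import re
from typing import Optional, Tuple

# Same patterns as the Nginx config above; ~* means case-insensitive.
SPECIAL_URL = re.compile(
    r"/wp-admin/|/xmlrpc.php|/wp-json|/rest_route=|wp-.*\.php|/feed/|index.php|sitemap(_index)?\.xml",
    re.IGNORECASE,
)
LOGGED_IN_COOKIE = re.compile(r"wordpress_logged_in|comment_author|wp-postpass|wordpress_no_cache", re.IGNORECASE)
ECOMMERCE_PATH = re.compile(r"/cart|/checkout|/my-account|/addons", re.IGNORECASE)


def cookie_value(cookie_header: str, name: str) -> Optional[str]:
    """Rough equivalent of $cookie_<name>: the value of one cookie, or None."""
    for pair in cookie_header.split(";"):
        key, sep, value = pair.strip().partition("=")
        if sep and key == name:
            return value
    return None


def skip_cache(method: str, request_uri: str, cookie_header: str = "",
               maintenance: bool = False) -> Tuple[int, str]:
    """Return ($skip_cache, $bypass_reason) the way the if blocks above would set them."""
    skip, reason = 0, ""
    if method == "POST":
        skip, reason = 1, "POST request"
    if request_uri.partition("?")[2]:          # $is_args: the request has arguments
        skip, reason = 1, "Query string"
    if maintenance:                            # -f "$document_root/.maintenance"
        skip, reason = 1, "Maintenance mode"
    if SPECIAL_URL.search(request_uri):
        skip, reason = 1, "Special URL"
    if LOGGED_IN_COOKIE.search(cookie_header):
        skip, reason = 1, "Logged-in cookie"
    if ECOMMERCE_PATH.search(request_uri):
        skip, reason = 1, "Ecommerce path"
    if cookie_value(cookie_header, "woocommerce_items_in_cart") == "1":
        skip, reason = 1, "Cart not empty"
    return skip, reason


if __name__ == "__main__":
    print(skip_cache("GET", "/about/"))                    # cacheable
    print(skip_cache("POST", "/wp-admin/admin-ajax.php"))  # last match wins: Special URL
    print(skip_cache("GET", "/?rest_route=/wp/v2/posts"))  # Query string
```

Running a few URLs through it also reveals a quirk in the regex: the /rest_route= alternative never matches on its own, because in $request_uri that parameter follows a ? rather than a /. Requests like /?rest_route=/wp/v2/posts are still skipped, just with the reason Query string.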

Why we skip query strings

This is the one some people will argue with.

And fair enough.

A strict if ($is_args) rule means any request with a query string bypasses cache. That includes perfectly harmless URLs like:

- /?utm_source=google

- /?fbclid=...

- /?ref=something

So why keep it?

Because for a general WordPress and WooCommerce server template, we would rather be conservative than clever.

Once you start selectively caching query-string URLs, you need to be very sure which parameters are safe and which are not. Search pages, previews, filtered results, campaign landing variations, and plugin-driven behaviour can all get messy quickly.

For a broad WordPress hosting baseline, we prefer the safer rule.

If you know your stack well and want to relax it later, you can.
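If you do relax the rule later, the core question is whether every parameter on the URL is one you are sure never changes the page. The sketch below shows that check in Python with a hypothetical allowlist of common tracking parameters; it is not part of the setup above, and ref is deliberately left out because some plugins use it to set referral cookies.

```python
from urllib.parse import parse_qsl

# Hypothetical allowlist: parameters assumed never to change the rendered page.
TRACKING_PARAMS = frozenset({
    "utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content",
    "fbclid", "gclid",
})


def only_tracking_params(query: str) -> bool:
    """True if the query string is non-empty and every parameter is on the allowlist."""
    pairs = parse_qsl(query, keep_blank_values=True)
    return bool(pairs) and all(name in TRACKING_PARAMS for name, _ in pairs)


if __name__ == "__main__":
    for q in ("utm_source=google", "fbclid=abc123", "ref=something", "s=shoes", "utm_source=x&s=shoes"):
        print(q, only_tracking_params(q))
```

Passing this check is only half the work. Since the cache key includes $request_uri, every distinct fbclid value would still create its own cache entry, so a relaxed rule also needs a cache key that drops those parameters. That extra moving part is one more argument for starting conservative.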

Why the PHP block is where the cache lives

One mistake we see often is trying to move FastCGI cache directives into location / because it looks cleaner.

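In a Forge-generated WordPress site, that usually causes confusion or broken behaviour. The location / block never talks to PHP-FPM itself: its try_files line falls back to /index.php, and that internal redirect is handled by the PHP location. FastCGI cache directives only take effect in the location that runs fastcgi_pass, so placed in location / they do nothing for WordPress pages.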

We keep the regular location / block intact:

location / {

try_files $uri $uri/ /index.php?$query_string;

}

Then we put the FastCGI cache directives in the PHP location block that already talks to PHP-FPM.

That keeps the Forge structure familiar and avoids fighting the way the config is already laid out.
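The reasoning is easier to see if you trace where a request ends up. In this simplified model (exact-match locations such as /favicon.ico and directory handling are left out), a direct .php request goes to the regex location, an existing file is served from disk by try_files, and everything else, including every pretty permalink, is internally redirected to /index.php and handled by the PHP block. The names are illustrative.

```python
import re
from typing import Set, Tuple

PHP_LOCATION = re.compile(r"\.php$")   # location ~ \.php$


def route(uri: str, existing_files: Set[str]) -> Tuple[str, str]:
    """Return (block, URI as seen by that block) for a request path."""
    if PHP_LOCATION.search(uri):
        # A matching regex location beats the plain prefix location /.
        return "php", uri
    if uri in existing_files:
        return "static", uri            # try_files $uri: served from disk, PHP not involved
    return "php", "/index.php"          # try_files fallback: internal redirect to the PHP block


if __name__ == "__main__":
    files = {"/wp-content/uploads/logo.png"}
    for path in ("/about/", "/wp-content/uploads/logo.png", "/wp-login.php"):
        print(path, route(path, files))
```

The internal redirect changes $uri to /index.php but leaves $request_uri untouched, which is why a cache key built on $request_uri still gives /about/ and /contact/ separate entries even though the same PHP block serves both.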

How we add it to a Forge site

A normal Forge PHP site template usually looks roughly like this:

ssl_protocols TLSv1.2 TLSv1.3;

ssl_ciphers ECDHE-ECDSA-AES128-GCM-SHA256:ECDHE-RSA-AES128-GCM-SHA256:ECDHE-ECDSA-AES256-GCM-SHA384:ECDHE-RSA-AES256-GCM-SHA384:ECDHE-ECDSA-CHACHA20-POLY1305:ECDHE-RSA-CHACHA20-POLY1305:DHE-RSA-AES128-GCM-SHA256:DHE-RSA-AES256-GCM-SHA384;

ssl_prefer_server_ciphers off;

ssl_dhparam /etc/nginx/dhparams.pem;

add_header X-Frame-Options "SAMEORIGIN";

add_header X-XSS-Protection "1; mode=block";

add_header X-Content-Type-Options "nosniff";

index index.html index.htm index.php;

charset utf-8;

# FORGE CONFIG (DO NOT REMOVE!)

include forge-conf/{{ SITE_ID }}/server/*;

location / {

try_files $uri $uri/ /index.php?$query_string;

}

location = /favicon.ico { access_log off; log_not_found off; }

location = /robots.txt { access_log off; log_not_found off; }

access_log off;

error_log /var/log/nginx/{{ SITE_ID }}-error.log error;

error_page 404 /index.php;

location ~ \.php$ {

fastcgi_split_path_info ^(.+\.php)(/.+)$;

fastcgi_pass {{ PROXY_PASS }};

fastcgi_index index.php;

include fastcgi_params;

}

location ~ /\.(?!well-known).* {

deny all;

}

That is the same Forge structure we used as the basis for our own integration.

To add FastCGI cache, we do three things:

- keep the regular location / block

- insert the skip logic above the PHP block

- replace the plain PHP block with the cache-aware one

That way we are not fighting Forge. We are extending it.

How to use Forge Nginx Templates for all new sites

If you only have one or two sites, you can patch the config manually.

If you plan to keep building WordPress sites on the same server pattern, that gets old fast.

Forge’s Nginx Templates feature is the right way to standardise this. Forge lets you create custom Nginx templates from the server dashboard, and those templates are used when you create new sites. Editing a template later does not change existing sites automatically, so treat it as a baseline for future sites, not a retroactive migration tool. Forge also warns that invalid templates can break Nginx and affect existing sites, so this is not something to edit casually on a production server.

Our approach is simple:

- take the default Forge PHP template

- leave the overall structure intact

- add the skip-logic section before location /

- replace the default PHP block with the cache-aware PHP block

- use Forge template variables like {{ SITE_ID }} and {{ PROXY_PASS }} instead of hardcoding sockets or IDs, because Forge exposes those variables specifically for use in templates.

If you are going to do this, test it on one non-critical site first.

Do not assume the template is correct just because it renders.
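Alongside testing on a non-critical site, a quick local check can catch two easy mistakes before a template reaches Forge: a placeholder left unreplaced and an unbalanced brace. The sketch below is a hypothetical helper, not a Forge feature. It only knows about {{ NAME }}-style placeholders such as {{ SITE_ID }} and {{ PROXY_PASS }}, its brace counting is naive (it skips # comments but not braces inside quoted strings or regex quantifiers), and it does not replace running nginx -t on the server.

```python
import re
from typing import Dict, List

PLACEHOLDER = re.compile(r"\{\{\s*([A-Z_]+)\s*\}\}")


def render(template: str, values: Dict[str, str]) -> str:
    """Substitute {{ NAME }} placeholders; unknown names are left in place."""
    return PLACEHOLDER.sub(lambda m: values.get(m.group(1), m.group(0)), template)


def problems(rendered: str) -> List[str]:
    """Report leftover placeholders and unbalanced braces."""
    issues: List[str] = []
    leftovers = sorted(set(PLACEHOLDER.findall(rendered)))
    if leftovers:
        issues.append("unreplaced placeholders: " + ", ".join(leftovers))
    depth = 0
    for lineno, line in enumerate(rendered.splitlines(), 1):
        code = line.split("#", 1)[0]    # drop comments (naive: '#' inside quotes is not special-cased)
        for ch in code:
            if ch == "{":
                depth += 1
            elif ch == "}":
                depth -= 1
                if depth < 0:
                    issues.append(f"line {lineno}: unexpected '}}'")
                    depth = 0
    if depth:
        issues.append(f"{depth} unclosed '{{'")
    return issues


if __name__ == "__main__":
    template = "location ~ \\.php$ {\n    fastcgi_pass {{ PROXY_PASS }};\n}\n"
    # Hypothetical value for illustration; Forge fills in the real one.
    rendered = render(template, {"PROXY_PASS": "unix:/var/run/php/php8.3-fpm.sock"})
    print(rendered)
    print(problems(rendered) or "no obvious problems")
```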

Minor Nginx tweaks we also made

On top of the cache rules, we made a few small Nginx changes.

Nothing exotic.

Just the basics:

events {

use epoll;

worker_connections 8192;

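}

And inside http {}:

tcp_nodelay on;

That is not a giant tuning checklist. It is just the minimal set of changes we actually kept after testing. Note that Nginx already enables tcp_nodelay by default, so that line mostly makes the setting explicit.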
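One way to sanity-check worker_connections 8192: the Nginx docs note that the limit covers every connection a worker holds, including connections to upstreams, so a request passed to PHP-FPM uses two connections while a cache HIT uses one. The rough calculation below assumes a single worker process (what worker_processes auto gives you on a 1 vCPU server) and ignores idle keepalive connections and file-descriptor limits.

```python
def max_in_flight_requests(worker_processes: int, worker_connections: int,
                           uncached_share: float) -> int:
    """Rough ceiling on simultaneous requests Nginx can hold open.

    A cache HIT holds one connection (the client); a request passed to PHP-FPM
    holds two (client + FastCGI upstream). uncached_share is the fraction of
    in-flight requests that miss or bypass the cache.
    """
    if not 0.0 <= uncached_share <= 1.0:
        raise ValueError("uncached_share must be between 0 and 1")
    connections_per_request = 1.0 + uncached_share
    return int(worker_processes * worker_connections / connections_per_request)


if __name__ == "__main__":
    for share in (0.0, 0.5, 1.0):
        print(share, max_in_flight_requests(1, 8192, share))
```

With pm.max_children in the tens, PHP-FPM usually runs out of workers long before Nginx runs out of connections, so this ceiling mainly matters for cached and static traffic.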

How we test whether the cache is really working

We do not guess.

We look at headers.

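A normal anonymous page should behave like this. Use a GET that prints only the response headers rather than curl -I: curl -I sends a HEAD request, and because $request_method is part of the cache key, HEAD and GET responses are cached as separate entries.

First request:

curl -s -o /dev/null -D - https://example.com/

Second request:

curl -s -o /dev/null -D - https://example.com/

What we want:

- first request: X-Cache: MISS

- second request: X-Cache: HIT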

The extra debug headers matter too:

- X-Cache-Bypass-Reason tells us why a request skipped cache

- X-Upstream-RT tells us how long the backend took

- X-Req-RT tells us the overall request time

On a real cache HIT, X-Req-RT should be tiny.
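To read those headers from a real GET, curl -s -o /dev/null -D - https://example.com/ prints only the response headers. The small parser below is a sketch with illustrative names. It turns that dump into a one-line summary, keeps only the final response if curl followed redirects (with -L), and shows a missing header as '-'. Nginx does not send a header whose value is empty, so X-Cache-Bypass-Reason should only appear on bypassed requests.

```python
from typing import Dict


def last_response_headers(dump: str) -> Dict[str, str]:
    """Parse `curl -D -` output into {lowercase-name: value} for the final response."""
    blocks = [b for b in dump.replace("\r\n", "\n").split("\n\n") if b.strip()]
    headers: Dict[str, str] = {}
    if not blocks:
        return headers
    for line in blocks[-1].strip().splitlines()[1:]:   # first line is the status line
        name, sep, value = line.partition(":")
        if sep:
            headers[name.strip().lower()] = value.strip()
    return headers


def summary(headers: Dict[str, str]) -> str:
    """One line with the debug headers from the cache-aware PHP block; '-' if absent."""
    def get(name: str) -> str:
        return headers.get(name) or "-"
    return (f"cache={get('x-cache')} reason={get('x-cache-bypass-reason')} "
            f"upstream_rt={get('x-upstream-rt')} req_rt={get('x-req-rt')}")
```

Piping the curl output into a short script that calls summary(last_response_headers(text)) gives one comparable line per request, which is handy when checking a list of URLs.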

When we were testing this setup, we also saw cases where a request was a confirmed cache HIT and origin-side request time was effectively zero, while the overall benchmark was still much slower because the test was coming from the other side of the world. That matters. If your load test runs from US-East and your origin is in Australia, a decent chunk of the result is just physics and network path, not Nginx.

So when you benchmark this setup, separate:

- origin processing time

- cache hit rate

- network and TLS overhead

Otherwise you will blame the wrong thing.
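A small sketch of that separation, assuming you collect one sample per request with the X-Cache value, the X-Req-RT value, and the total time your client measured (for example with curl's -w '%{time_total}'). X-Req-RT is Nginx's own $request_time, so the gap between it and the client total is a rough estimate of network, TLS, and queueing overhead, not an exact split. The function name is illustrative.

```python
from statistics import median
from typing import Dict, Iterable, Tuple


def breakdown(samples: Iterable[Tuple[str, float, float]]) -> Dict[str, float]:
    """samples: (x_cache, x_req_rt_seconds, client_total_seconds), one tuple per request."""
    rows = list(samples)
    if not rows:
        raise ValueError("need at least one sample")
    hits = sum(1 for cache, _, _ in rows if cache == "HIT")
    return {
        "hit_rate": hits / len(rows),
        "median_origin_s": median(req_rt for _, req_rt, _ in rows),
        # Whatever the client waited beyond Nginx's own request time.
        "median_outside_origin_s": median(total - req_rt for _, req_rt, total in rows),
    }
```

If the hit rate is high and the origin median is near zero but the outside-origin median is large, the remaining time is mostly distance and handshakes rather than Nginx or PHP.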

The PHP-FPM pool tuning we pair with it

FastCGI page cache helps a lot, but it is not the whole picture.

We also hit the usual PHP-FPM warning on a Forge server running multiple WordPress sites:

WARNING: [pool vcore] seems busy … there are 0 idle …

Our original pool settings were:

pm = dynamic

pm.max_children = 20

pm.start_servers = 2

pm.min_spare_servers = 1

pm.max_spare_servers = 3

That is conservative enough to look sensible on paper, but still too tight for bursty WordPress traffic.

The problem is not always CPU.

The problem is concurrency inside the pool.

A WordPress site can have low average CPU usage and still run out of immediately available PHP workers when several requests arrive together. Admin AJAX, cron, background tasks, REST calls, and plugin behaviour all contribute to that.

We were testing on a 2 GB RAM, 1 vCPU premium AMD server with three WordPress sites, low CPU use, and around 50% memory use. Our recommended starting point for that sort of box is:

pm = dynamic

pm.max_children = 30

pm.start_servers = 4

pm.min_spare_servers = 4

pm.max_spare_servers = 8

pm.max_requests = 500

Then reload PHP-FPM, for example with sudo systemctl reload php8.3-fpm (adjust the version to match your server).

That gives the pool more breathing room and reduces the lag caused by constantly spawning children from an unrealistically low baseline.

This is not a universal magic config. It is a starting point.

But it is a much more realistic one for a small Forge server hosting several WordPress sites.
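Two quick checks help when adapting those numbers. The first is a rough memory budget: the RAM you can give PHP divided by the average size of one child, which you would measure on your own server. The 1024 MB reserve and 40 MB per child below are hypothetical figures, and the budget is shared by every pool on the box, so three sites with their own pools split it. The second restates the consistency rules PHP-FPM enforces for pm = dynamic, so a typo fails here rather than on reload.

```python
from typing import Dict, List


def max_children_budget(ram_mb: int, reserved_mb: int, avg_child_mb: int) -> int:
    """How many PHP-FPM children fit in memory across all pools (worst case: all busy)."""
    return max(0, (ram_mb - reserved_mb) // avg_child_mb)


def check_dynamic_pool(pm: Dict[str, int]) -> List[str]:
    """Consistency rules PHP-FPM applies to a pm = dynamic pool."""
    errors: List[str] = []
    max_children, start = pm["max_children"], pm["start_servers"]
    min_spare, max_spare = pm["min_spare_servers"], pm["max_spare_servers"]
    if min(max_children, min_spare, max_spare) <= 0:
        errors.append("max_children and spare-server counts must be positive")
    if min_spare > max_children or max_spare > max_children:
        errors.append("spare-server counts cannot exceed max_children")
    if max_spare < min_spare:
        errors.append("max_spare_servers must not be less than min_spare_servers")
    if not min_spare <= start <= max_spare:
        errors.append("start_servers must be between min_spare_servers and max_spare_servers")
    return errors


if __name__ == "__main__":
    recommended = {"max_children": 30, "start_servers": 4,
                   "min_spare_servers": 4, "max_spare_servers": 8}
    print(check_dynamic_pool(recommended) or "pool settings are consistent")
    # Hypothetical figures: 2048 MB RAM, 1024 MB kept for the database, Nginx and the OS, 40 MB per child.
    print(max_children_budget(2048, 1024, 40))
```

Swap in your own measurements. If the budget comes out below pm.max_children, a burst that keeps every child busy could push the server into swap.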

Why FastCGI cache, PHP-FPM tuning, and kernel TCP tuning belong together

Because they solve different parts of the same request path.

Kernel TCP tuning affects what happens before Nginx even starts serving the request. It helps the server deal with bursts of incoming connections, listener backlogs, and queue pressure more cleanly.

FastCGI page cache reduces how many requests reach PHP at all. If the response can be served from Nginx cache, PHP-FPM does not need to do the work.

PHP-FPM pool tuning improves how PHP behaves when requests do reach it. That matters for logged-in users, WooCommerce flows, uncached pages, and the first request that populates the cache.

If you only tune one layer, you usually still leave performance on the table.

A server with good PHP-FPM settings but no page cache still wastes PHP workers on public traffic. A server with page cache but badly tuned pools can still struggle on cache misses, admin traffic, and dynamic requests. A server with both of those in place can still hit avoidable connection bottlenecks if the kernel-side network defaults are too conservative for the traffic pattern.

That is why we treat this as a stack-level setup rather than a single Nginx tweak.

When this setup is the right choice

We like this setup for:

- brochure sites

- service business sites

- content-heavy WordPress sites

- WooCommerce sites, if the skip rules are handled carefully

- teams who want server-side control instead of plugin-heavy caching stacks

When this setup is not the right choice

We would be more cautious if:

- the site has highly personalised output for anonymous visitors

- query-string-based behaviour is core to the app

- the site is mostly an application, not mostly content

- someone on the team is likely to edit Nginx casually without testing first

FastCGI cache is powerful, but it is not forgiving if you cache the wrong thing.

Final thoughts

For our WordPress sites at vCore Digital, this is the setup we keep coming back to.

Not because it is the most clever. Not because it is the shortest. And not because it gives us the prettiest config file.

We keep using it because it is practical, predictable, and easy to reason about when something goes wrong.

That matters more than most people admit.

The short version is simple:

- tune the kernel TCP stack sensibly before traffic reaches Nginx

- define a global FastCGI cache zone

- keep Forge’s normal location / block

- add WordPress-aware skip rules

- put the FastCGI cache directives in the PHP location

- expose cache headers so debugging is obvious

- pair it with more realistic PHP-FPM pool limits

- use Forge Nginx Templates so new sites start from the same baseline

That gives us a stack that is fast under public traffic, stable under bursts, and still understandable six months later.

For us, that is the real goal. Better performance, fewer moving parts, and less guesswork when a site is under load.
